Foundry apps help automate data labeling and enrichment. Here, we show how to use the Labelbox SDK to manage Foundry apps and how to use a Foundry app to predict and import annotations into a dataset.

Create a Foundry app

A Foundry app (short for Foundry application) helps automate data labeling and enrichment. Once you’ve created a Foundry app, you can run it repeatedly against new data.

You create Foundry apps in the Labelbox Model.

Once the app is created, you can use the Labelbox SDK to run your app and manage its results.

Run a Foundry app

You can use the Labelbox Model to run Foundry apps. Results are displayed like any other model run.

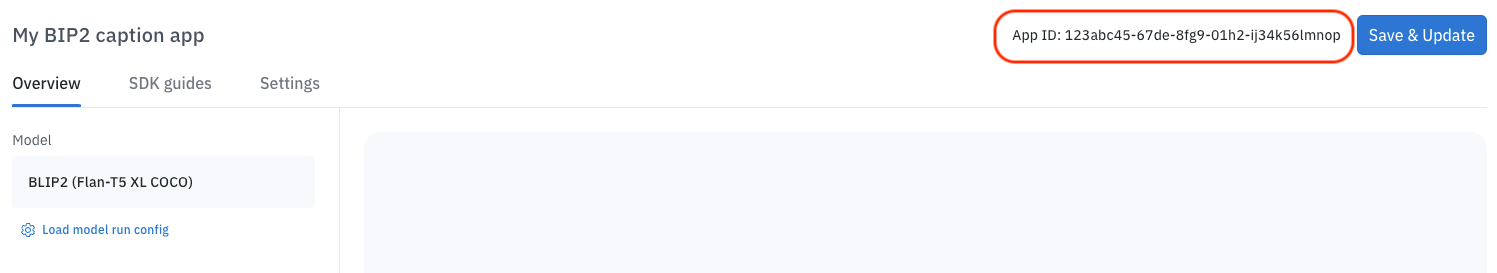

When running a Foundry app, you must provide an app ID. You can find the app ID by going to the Model tab and selecting Apps. When you select a Foundry app, the app ID will appear in the top right corner of the screen.

In addition to the app ID, the method also requires a DataRowIdentifiables object (such as lb.GlobalKeys) and a unique model run name.

task = client.run_foundry_app(model_run_name=f"Amazon-{str(uuid.uuid4())}",

data_rows=lb.GlobalKeys(

[global_key] # Provide a list of global keys

),

app_id=AMAZON_REKOGNITION_APP_ID)

task.wait_till_done()

print(f"Errors: {task.errors}")

#Obtain model run ID from task

MODEL_RUN_ID = task.metadata["modelRunId"]
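Because each run needs a unique name, it can help to build the name in one place. The following is a minimal sketch; `unique_run_name` is a hypothetical helper, not part of the Labelbox SDK, and it only mirrors the prefix-plus-UUID pattern used in the call above.

```python
import uuid


def unique_run_name(prefix: str) -> str:
    """Return a model run name made of a prefix plus a random UUID4 suffix."""
    if not prefix:
        raise ValueError("prefix must be a non-empty string")
    return f"{prefix}-{uuid.uuid4()}"


# Each call yields a different name, so repeated runs do not share a name.
print(unique_run_name("Amazon"))
```

The result can be passed as `model_run_name` when calling `client.run_foundry_app`.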

Send Foundry annotations to Annotate

When you send predictions to Annotate from Catalog, you may choose to include or exclude certain parameters.

Parameters

| Parameter | Required? | Description |
|---|---|---|
| predictions_ontology_mapping | Required | A dictionary containing the mapping of the model’s ontology feature schema IDs to the project’s ontology feature schema IDs. See Send predictions to Annotate for more information on where to access your predictions map. |
| exclude_data_rows_in_project | Optional | Excludes data rows that are already in the project. |
| override_existing_annotations_rule | Optional | The strategy defines how to handle conflicts in classifications between the existing data rows in the project and incoming predictions from the source model run or annotations from the source project. Options include: ConflictResolutionStrategy.KeepExisting (default), ConflictResolutionStrategy.OverrideWithPredictions, ConflictResolutionStrategy.OverrideWithAnnotations. |
| batch_priority | Optional | Assigned priority to the batch of data rows (1-5). |
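The parameters can be assembled and sanity-checked before any SDK call is made. This is a minimal sketch under stated assumptions: `build_send_to_annotate_params` is a hypothetical helper, its checks only reflect the table above (a required mapping and a 1-5 priority range), and optional keys are left out when not supplied so that no defaults are invented here.

```python
from typing import Any, Dict, Optional


def build_send_to_annotate_params(
    predictions_ontology_mapping: Dict[str, str],
    exclude_data_rows_in_project: Optional[bool] = None,
    override_existing_annotations_rule: Optional[Any] = None,
    batch_priority: Optional[int] = None,
) -> Dict[str, Any]:
    """Build the params dictionary, including only the options that were set."""
    if not isinstance(predictions_ontology_mapping, dict):
        raise TypeError("predictions_ontology_mapping must be a dictionary")
    if batch_priority is not None and not 1 <= batch_priority <= 5:
        raise ValueError("batch_priority must be between 1 and 5")

    params: Dict[str, Any] = {
        "predictions_ontology_mapping": predictions_ontology_mapping,
    }
    if exclude_data_rows_in_project is not None:
        params["exclude_data_rows_in_project"] = exclude_data_rows_in_project
    if override_existing_annotations_rule is not None:
        # Pass a ConflictResolutionStrategy member here in real code.
        params["override_existing_annotations_rule"] = override_existing_annotations_rule
    if batch_priority is not None:
        params["batch_priority"] = batch_priority
    return params
```

Failing early on an out-of-range priority keeps the mistake local instead of surfacing later as a task error.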

Sample script

model_run = client.get_model_run("<model_run_id>")

send_to_annotations_params = {

"predictions_ontology_mapping": PREDICTIONS_ONTOLOGY_MAPPING,

"exclude_data_rows_in_project": False,

"override_existing_annotations_rule": ConflictResolutionStrategy.OverrideWithPredictions,

"batch_priority": 5,

}

task = model_run.send_to_annotate_from_model(

destination_project_id=project.uid,

task_queue_id=None, # ID of the workflow task queue. Set to None to convert pre-labels to ground truth, or get a task queue ID through project.task_queues().

batch_name="Foundry Demo Batch",

data_rows=lb.GlobalKeys(

[global_key] # Provide a list of global keys from foundry app task

),

params=send_to_annotations_params

)

task.wait_till_done()

print(f"Errors: {task.errors}")
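Printing `task.errors` is easy to overlook in a longer script. As a sketch, a small hypothetical helper such as `raise_on_task_errors` below can turn a non-empty `errors` value into an exception. It assumes only that the task object exposes an `errors` attribute, as the samples in this guide do; a stand-in object is used here so the snippet runs without an API key.

```python
from types import SimpleNamespace


def raise_on_task_errors(task, action: str) -> None:
    """Raise RuntimeError if the finished task reports any errors."""
    errors = getattr(task, "errors", None)
    if errors:
        raise RuntimeError(f"{action} failed: {errors}")


# Stand-in for a finished SDK task; real code would pass the task
# returned by the SDK after task.wait_till_done().
finished_task = SimpleNamespace(errors=None)
raise_on_task_errors(finished_task, "Send to Annotate")
print("No errors reported")
```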

Example: Run and import annotations from a Foundry app

Regardless of what model you use from Foundry, the workflow through the SDK is similar. Step 6 (Map ontology through the UI) is where the process can slightly differ.

An interactive tutorial is also available as a Colab notebook; it shows how to run and import annotations from a Foundry app.

Before you start

You will need to import these libraries to use the code examples in this section.

import labelbox as lb

from labelbox.schema.conflict_resolution_strategy import ConflictResolutionStrategy

import uuid

Replace with your API key

API_KEY = ""

client = lb.Client(API_KEY)

Step 1: Import data rows into Catalog

You must have data rows in Catalog before you can run them through Foundry. In this example, we are using an image data row.

# send a sample image as data row for a dataset

global_key = str(uuid.uuid4())

test_img_url = {

"row_data":

"https://storage.googleapis.com/labelbox-datasets/image_sample_data/2560px-Kitano_Street_Kobe01s5s4110.jpeg",

"global_key":

global_key

}

dataset = client.create_dataset(name="foundry-demo-dataset")

task = dataset.create_data_rows([test_img_url])

task.wait_till_done()

print(f"Errors: {task.errors}")

print(f"Failed data rows: {task.failed_data_rows}")
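When importing more than one image, the same payload shape can be generated in a loop. The following is a minimal sketch; `build_image_rows` is a hypothetical helper and the URLs in the usage lines are placeholders. It returns both the payloads and their global keys, since the keys are needed again when running the Foundry app.

```python
import uuid
from typing import Dict, List, Tuple


def build_image_rows(urls: List[str]) -> Tuple[List[Dict[str, str]], List[str]]:
    """Create one data row payload per URL, each with a fresh global key."""
    rows: List[Dict[str, str]] = []
    global_keys: List[str] = []
    for url in urls:
        key = str(uuid.uuid4())
        rows.append({"row_data": url, "global_key": key})
        global_keys.append(key)
    return rows, global_keys


# Placeholder URLs for illustration only.
rows, global_keys = build_image_rows([
    "https://example.com/image-1.jpeg",
    "https://example.com/image-2.jpeg",
])
print(len(rows), "payloads prepared")
```

The `rows` list can then be passed to `dataset.create_data_rows`, and `global_keys` to `lb.GlobalKeys`.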

Step 2: Set up ontology

Your project should have an ontology configured with the tools and classifications that your model and selected data type support.

For example, Amazon Rekognition supports only object detection, so your ontology must include bounding box tools.

# Create an ontology with two bounding box tools for objects that Amazon Rekognition detects: Car and Person

ontology_builder = lb.OntologyBuilder(

classifications=[],

tools=[

lb.Tool(tool=lb.Tool.Type.BBOX, name="Car"),

lb.Tool(tool=lb.Tool.Type.BBOX, name="Person")

]

)

ontology = client.create_ontology("Image Bounding Box Annotation Demo Foundry",

ontology_builder.asdict(),

media_type=lb.MediaType.Image)
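Before creating the ontology, you may want to confirm that it contains a tool for every class you expect the model to predict. This is a sketch under an assumption: it treats the output of `ontology_builder.asdict()` as a dictionary with a `tools` list whose entries carry a `name` key. The `missing_tool_names` helper is hypothetical, and the dictionary below is a simplified stand-in rather than real SDK output.

```python
from typing import Dict, Iterable, List


def missing_tool_names(ontology_dict: Dict, expected: Iterable[str]) -> List[str]:
    """Return the expected tool names that the ontology dictionary lacks."""
    present = {tool.get("name") for tool in ontology_dict.get("tools", [])}
    return [name for name in expected if name not in present]


# Simplified stand-in for ontology_builder.asdict(); assumed shape.
example_ontology = {
    "tools": [
        {"tool": "rectangle", "name": "Car"},
        {"tool": "rectangle", "name": "Person"},
    ],
    "classifications": [],
}
print(missing_tool_names(example_ontology, ["Car", "Person", "Bicycle"]))
```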

Step 3: Create a labeling project

Connect the ontology to the labeling project.

project = client.create_project(name="Foundry Image Demo",

media_type=lb.MediaType.Image)

project.connect_ontology(ontology)

Step 4: Create new Foundry app & copy App ID

To use the Labelbox app to create Foundry apps:

From the main menu, select Model and then select App from the Create menu.

Select Amazon Rekognition, name your Foundry app, and then select Proceed.

Customize your parameters and then select Save & Create.

In Model, select Apps and then select your new Foundry app.

Use the Copy button to copy the App ID to the clipboard.

Paste the copied App ID into your code:

#Select your foundry application inside the UI and copy the APP ID from the top right corner

AMAZON_REKOGNITION_APP_ID = "<App ID goes here>"

Step 5: Run Foundry app on data rows

This step generates annotations that can later be reused as pre-labels or ground truth in a project.

task = client.run_foundry_app(model_run_name=f"Amazon-{str(uuid.uuid4())}",

data_rows=lb.GlobalKeys(

[global_key] # Provide a list of global keys

),

app_id=AMAZON_REKOGNITION_APP_ID)

task.wait_till_done()

print(f"Errors: {task.errors}")

#Obtain model run ID from task

MODEL_RUN_ID = task.metadata["modelRunId"]
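If the run fails, `task.metadata` may not contain a model run ID, and the direct lookup above would raise a bare `KeyError`. As a sketch, the hypothetical `get_model_run_id` helper below reads the same `modelRunId` key and reports a clearer message. A stand-in object replaces the real task so the snippet runs offline, and the ID shown is a placeholder.

```python
from types import SimpleNamespace


def get_model_run_id(task) -> str:
    """Return the model run ID from a finished Foundry task's metadata."""
    metadata = getattr(task, "metadata", None) or {}
    model_run_id = metadata.get("modelRunId")
    if not model_run_id:
        raise KeyError("task metadata has no 'modelRunId'; check task.errors")
    return model_run_id


# Stand-in for the task returned by client.run_foundry_app(...).
finished_task = SimpleNamespace(metadata={"modelRunId": "placeholder-run-id"}, errors=None)
print(get_model_run_id(finished_task))
```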

Step 6: Map ontology through the UI

Predictions may not directly map to ontology features. When this happens, you need to map predictions to your ontology. To do this using the Labelbox app:

In Catalog, select the dataset used for your model run, and then select its data rows by choosing Select all in the top right corner.

Select Manage selection > Send to Annotate.

Select your project from the Project menu.

When sending annotations for Annotate review, you typically select a workflow step. This isn’t necessary for this example.

Place a checkmark next to Include model predictions and then select Map.

Select the incoming ontology and matching ontology features for Car and Person.

When the features are mapped, select Copy ontology mapping as JSON.

Paste your copied JSON into the definition of PREDICTIONS_ONTOLOGY_MAPPING:

# Copy the ontology mapping from the UI, then paste the JSON here

PREDICTIONS_ONTOLOGY_MAPPING = {}

In a production workflow, you would typically save your configuration. You can skip this step for the sake of this example.
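A pasted mapping is easy to truncate or leave empty. The sketch below parses the copied JSON and checks its shape before it is used. It assumes the mapping is a flat dictionary from model feature schema IDs to project feature schema IDs, as the parameter description states. `parse_ontology_mapping` is a hypothetical helper, and the IDs in the usage line are placeholders.

```python
import json
from typing import Dict


def parse_ontology_mapping(raw_json: str) -> Dict[str, str]:
    """Parse copied mapping JSON and verify it is a non-empty str-to-str dict."""
    mapping = json.loads(raw_json)
    if not isinstance(mapping, dict) or not mapping:
        raise ValueError("mapping must be a non-empty JSON object")
    for source_id, target_id in mapping.items():
        if not isinstance(target_id, str) or not source_id or not target_id:
            raise ValueError(f"invalid mapping entry: {source_id!r} -> {target_id!r}")
    return mapping


# Placeholder IDs; paste the JSON copied from the UI instead.
copied = '{"model-feature-schema-id": "project-feature-schema-id"}'
PREDICTIONS_ONTOLOGY_MAPPING = parse_ontology_mapping(copied)
print(len(PREDICTIONS_ONTOLOGY_MAPPING), "feature(s) mapped")
```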

Step 7: Send annotations from Catalog to Annotate

model_run = client.get_model_run(MODEL_RUN_ID)

send_to_annotations_params = {

"predictions_ontology_mapping": PREDICTIONS_ONTOLOGY_MAPPING,

"exclude_data_rows_in_project": False,

"override_existing_annotations_rule": ConflictResolutionStrategy.OverrideWithPredictions,

"batch_priority": 5,

}

task = model_run.send_to_annotate_from_model(

destination_project_id=project.uid,

task_queue_id=None, # ID of the workflow task queue. Set to None to convert pre-labels to ground truth, or get a task queue ID through project.task_queues().

batch_name="Foundry Demo Batch",

data_rows=lb.GlobalKeys(

[global_key] # Provide a list of global keys from foundry app task

),

params=send_to_annotations_params

)

task.wait_till_done()

print(f"Errors: {task.errors}")
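To send predictions to a specific workflow step instead of passing `None`, you need the ID of that step's task queue. The following is a sketch under an assumption: it treats each object returned by `project.task_queues()` as having `name` and `uid` attributes. `find_task_queue_id` is a hypothetical helper, and the queue names and IDs below are stand-ins.

```python
from types import SimpleNamespace
from typing import Iterable


def find_task_queue_id(task_queues: Iterable, name: str) -> str:
    """Return the uid of the task queue with the given name."""
    for queue in task_queues:
        if queue.name == name:
            return queue.uid
    raise LookupError(f"no task queue named {name!r}")


# Stand-ins for project.task_queues(); names and IDs are illustrative only.
queues = [
    SimpleNamespace(name="Labeling", uid="queue-id-1"),
    SimpleNamespace(name="Review", uid="queue-id-2"),
]
print(find_task_queue_id(queues, "Review"))
```

The helper raises instead of returning `None` for an unknown name, because `task_queue_id=None` has its own meaning here: it converts pre-labels to ground truth.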