Annotate is the data labeling platform within Labelbox. It allows your organization to label data with any human workforce at any scale.

How Annotate works

When it comes to deciding how to label your data, you have the following options:

- Outsource this task to a labeling service. These external teams receive training on the specific labeling tasks required and quickly proceed to label large datasets (see Workforce).

- Import your model predictions as pre-labels to speed up the labeling process (see Model-assisted labeling).

- Rely on your own internal team of labelers to label your dataset (see Labeling editors).
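
To make the pre-label option above more concrete, here is a minimal sketch of turning model output into pre-label records and serializing them as newline-delimited JSON. It uses only the Python standard library and does not call the Labelbox SDK. The helper names (`bbox_prelabel`, `to_ndjson`), the data row IDs, and the exact field names in the payload are assumptions for illustration; consult the Labelbox import documentation for the format your project actually requires.

```python
import json
import uuid


def bbox_prelabel(data_row_id, feature_name, top, left, height, width):
    """Build one bounding-box pre-label as a plain dict.

    The field names here are an assumed payload shape, not a confirmed schema.
    """
    return {
        "uuid": str(uuid.uuid4()),          # unique ID for this annotation
        "dataRow": {"id": data_row_id},     # which asset the pre-label belongs to
        "name": feature_name,               # should match a tool name in the ontology
        "bbox": {"top": top, "left": left, "height": height, "width": width},
    }


def to_ndjson(records):
    """Serialize records as newline-delimited JSON, one record per line."""
    return "\n".join(json.dumps(record) for record in records)


# Hypothetical model output: (data row ID, class name, (top, left, height, width)).
model_predictions = [
    ("data-row-1", "car", (10, 20, 50, 80)),
    ("data-row-2", "pedestrian", (5, 5, 40, 15)),
]

prelabels = [bbox_prelabel(dr, name, *box) for dr, name, box in model_predictions]
ndjson_payload = to_ndjson(prelabels)
```

Labelers would then start from these pre-filled annotations and correct them, rather than drawing each one from scratch.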

Customizable labeling editor

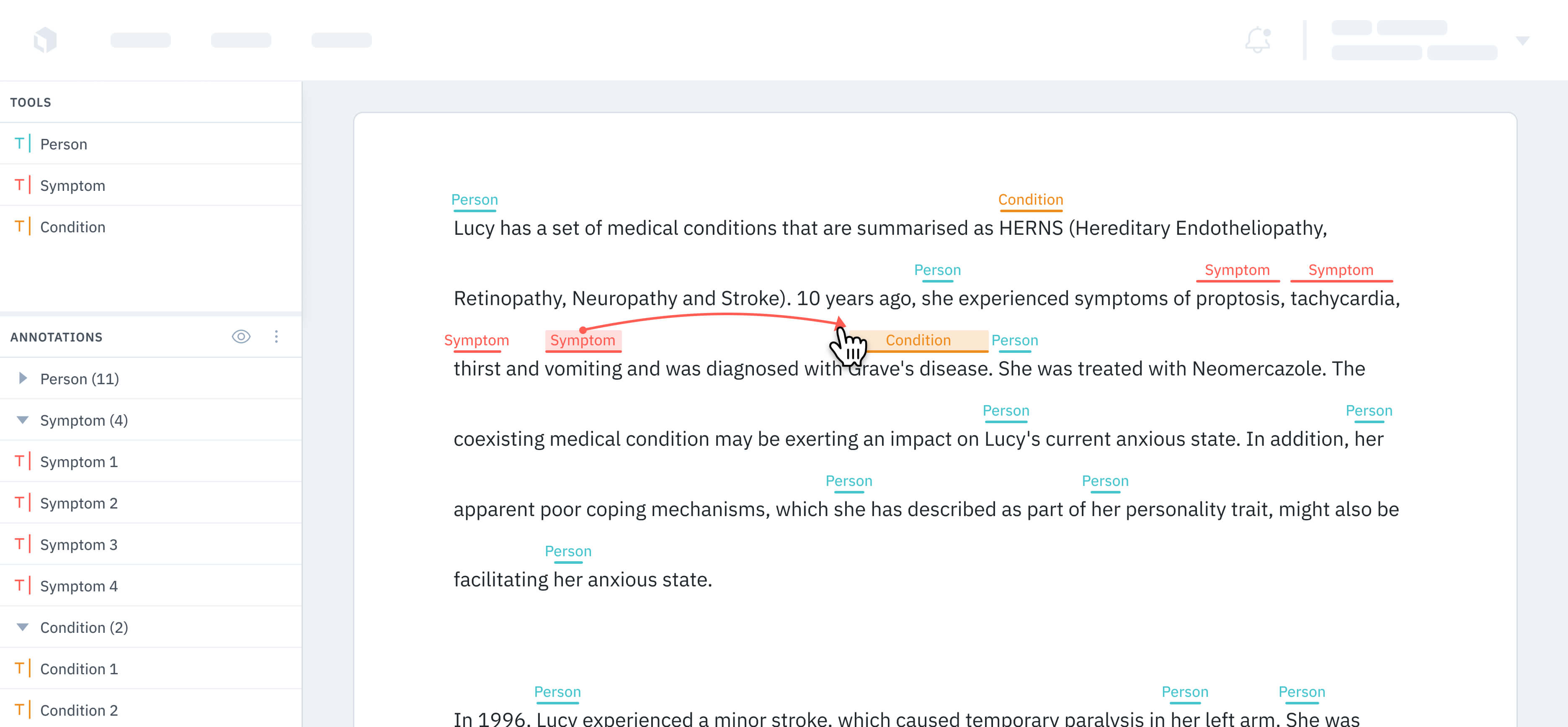

The editor is a highly configurable labeling interface and the primary tool for creating, viewing, and editing annotations. The labeling editor supports the following media types out of the box:

- Images

- Video

- Text

- Documents

- Geospatial

- Audio

- Conversational text

- HTML

- LLM human preference

- Prompt and response generation

- Multimodal chat evaluation

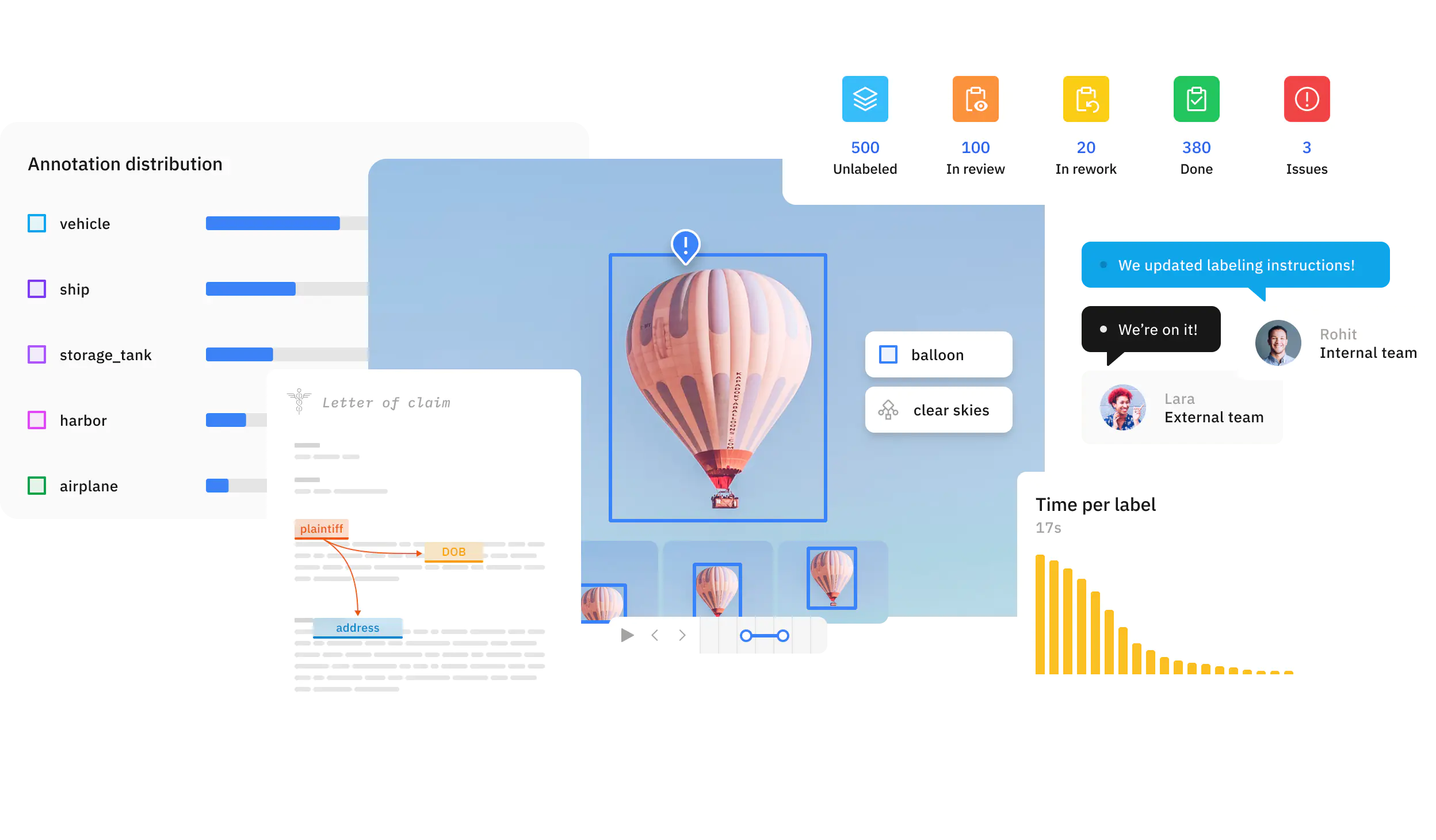

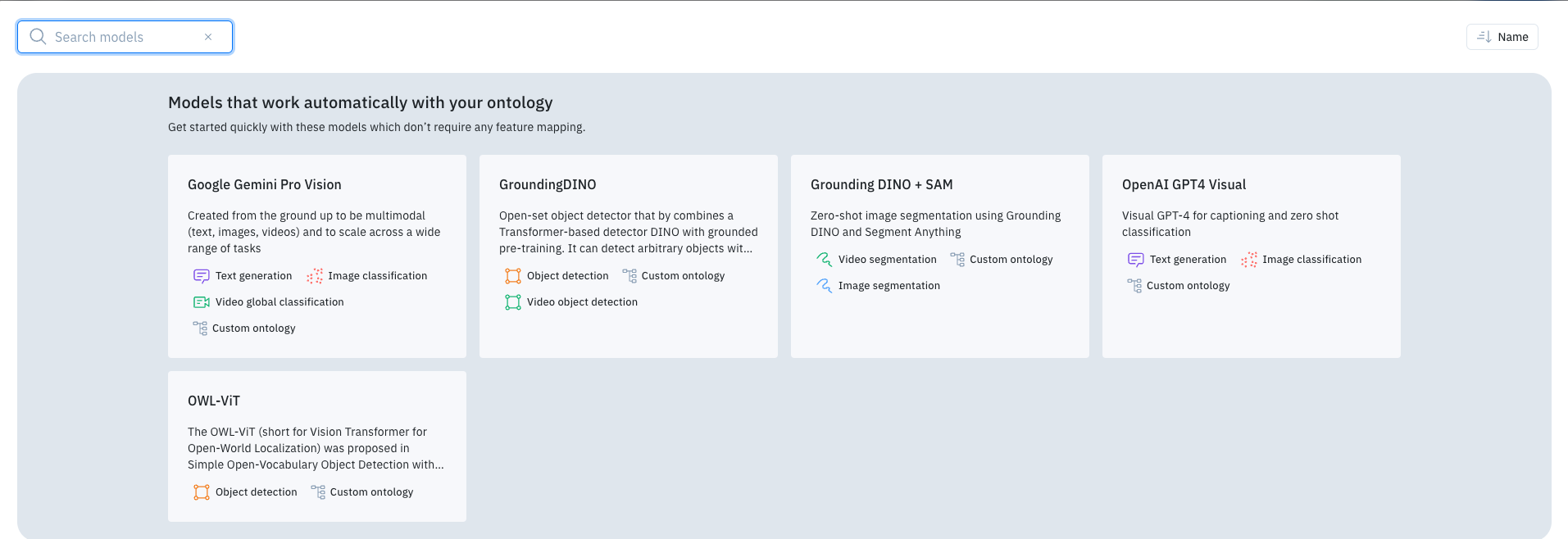

Model Assisted Labeling with Foundry

Users can use Foundry as an auto-labeling feature that adds tool or classification annotations to data rows. Setup requires a project that has data to label and an editor with an ontology configured. Once that setup is complete, users can enable the Model Assisted Labeling tool and configure an LLM to create annotations.

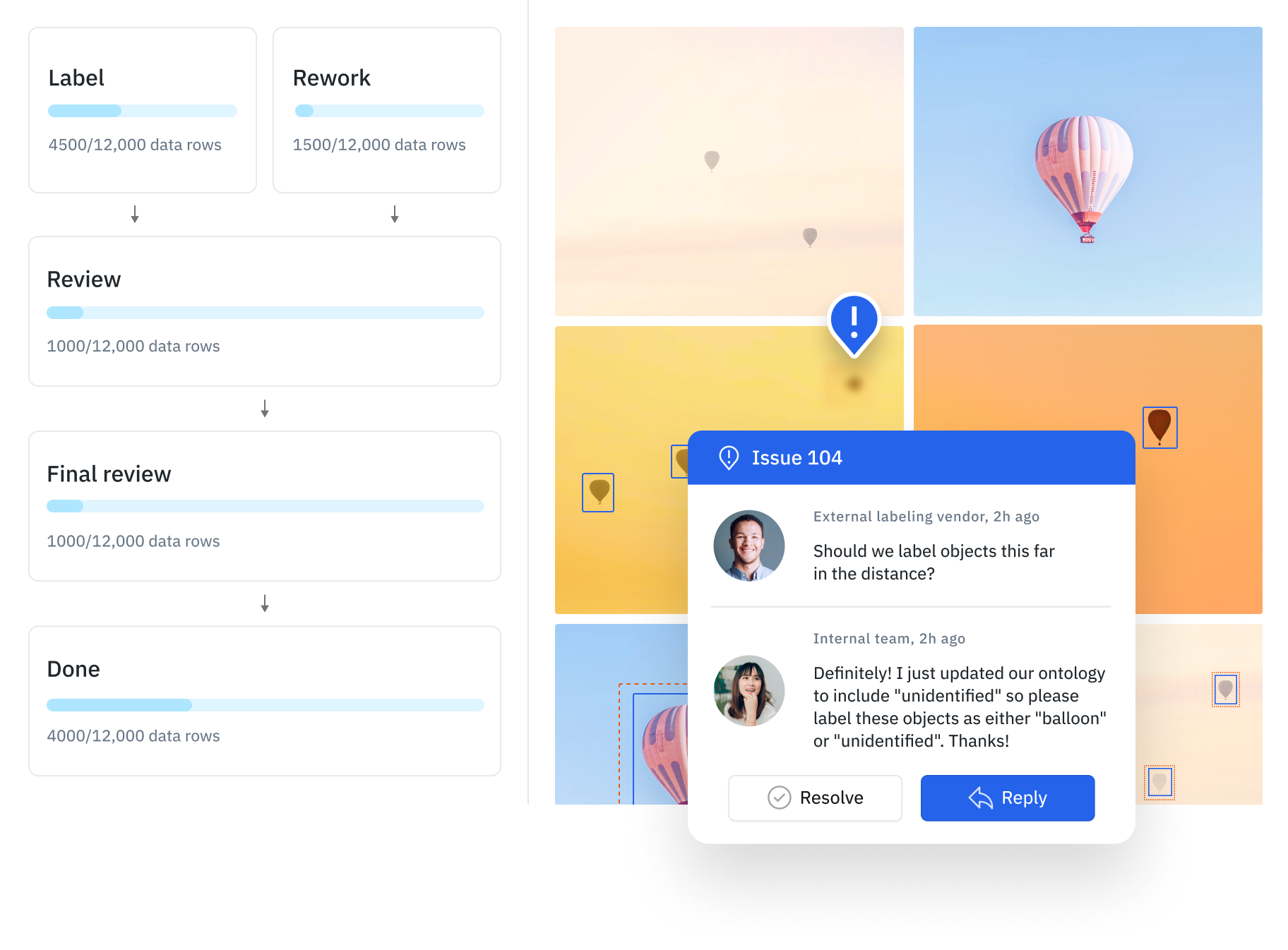

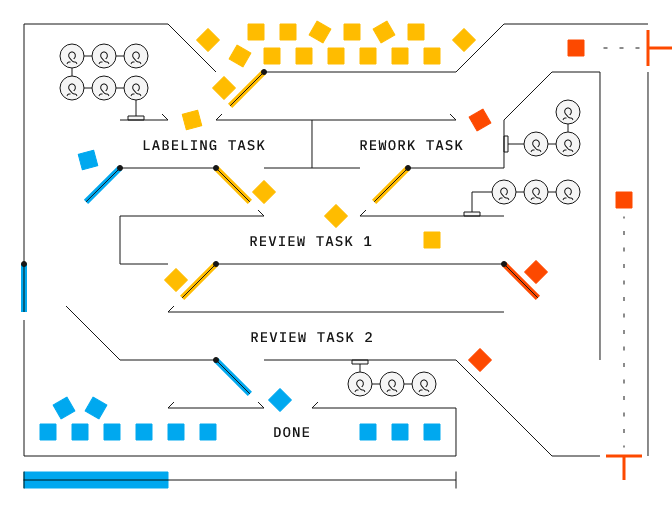

Customizable labeling & review settings

In your project’s Workflow tab, you can set up customized review steps that match your quality strategy. This matters for large, complex projects, where reviewing every labeled data row becomes increasingly time-consuming and expensive.

- Issues & comments

- Benchmarks

- Consensus

- Performance dashboard
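
The cost concern in this section, that reviewing every labeled data row does not scale, is commonly addressed by reviewing only a sample. The sketch below illustrates that idea in plain Python. In Labelbox, review steps are configured in the Workflow tab rather than in code, so the function `sample_for_review`, its parameters, and the data row IDs are all hypothetical.

```python
import random


def sample_for_review(data_row_ids, review_fraction, seed=0):
    """Pick a reproducible random subset of labeled data rows to review.

    review_fraction is the share of rows to review, between 0.0 and 1.0.
    """
    if not 0.0 <= review_fraction <= 1.0:
        raise ValueError("review_fraction must be between 0.0 and 1.0")
    rng = random.Random(seed)  # fixed seed so the same rows are chosen each run
    sample_size = round(len(data_row_ids) * review_fraction)
    return sorted(rng.sample(data_row_ids, sample_size))


# Hypothetical project with 1,000 labeled data rows; review 10% of them.
labeled_rows = [f"data-row-{i}" for i in range(1000)]
to_review = sample_for_review(labeled_rows, review_fraction=0.10)
```

Raising or lowering `review_fraction` trades review cost against how much of the labeled data a reviewer actually sees.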

Labeling workforce

Labelbox labeling services, powered by Labelbox’s data engine, let you collaborate with an external labeling workforce in real time. You can produce high-quality data while using AI and automation techniques to keep human labeling costs to a minimum. To learn more, see Labeling services.